The Quiet Revolution in AI Memory

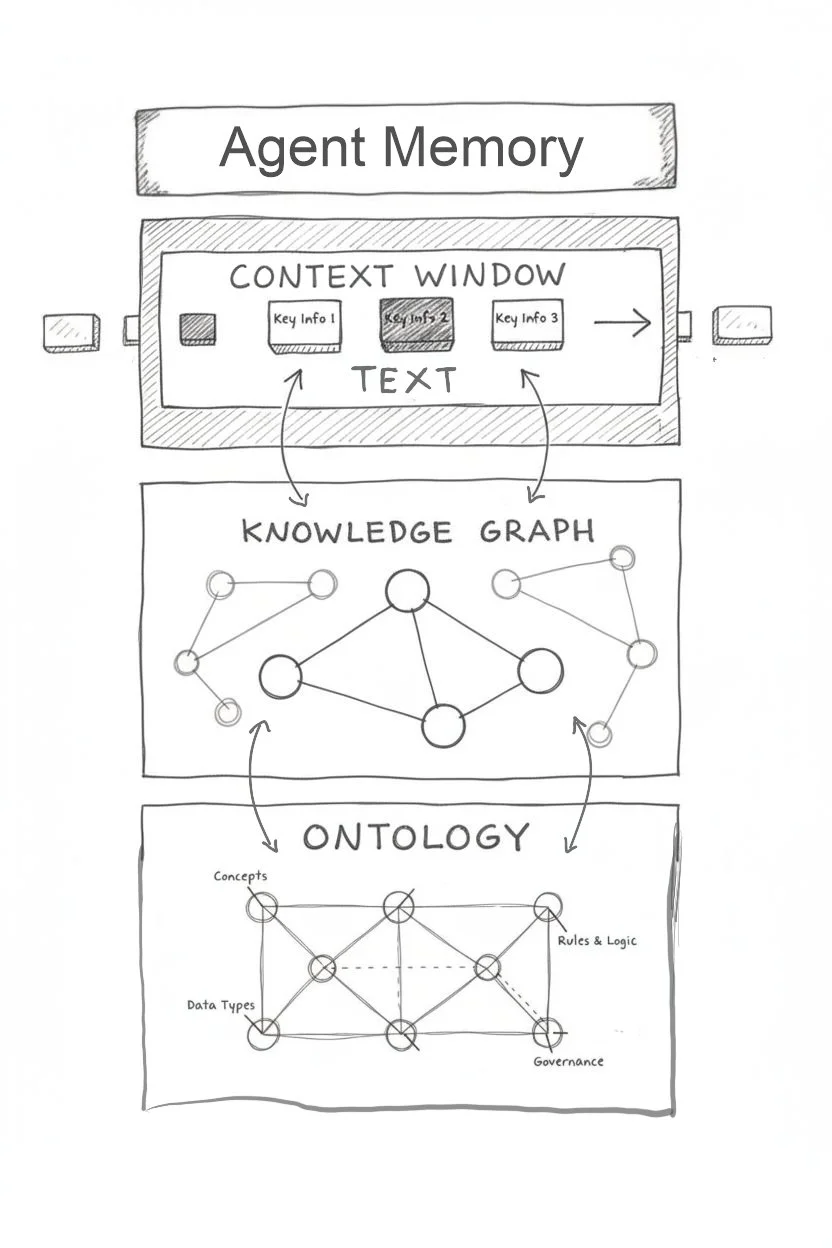

AI’s next breakthrough isn’t better code generation; it’s memory. As models gain the ability to store, compress, and retrieve context over time, tool use plus memory is turning LLMs into agents.

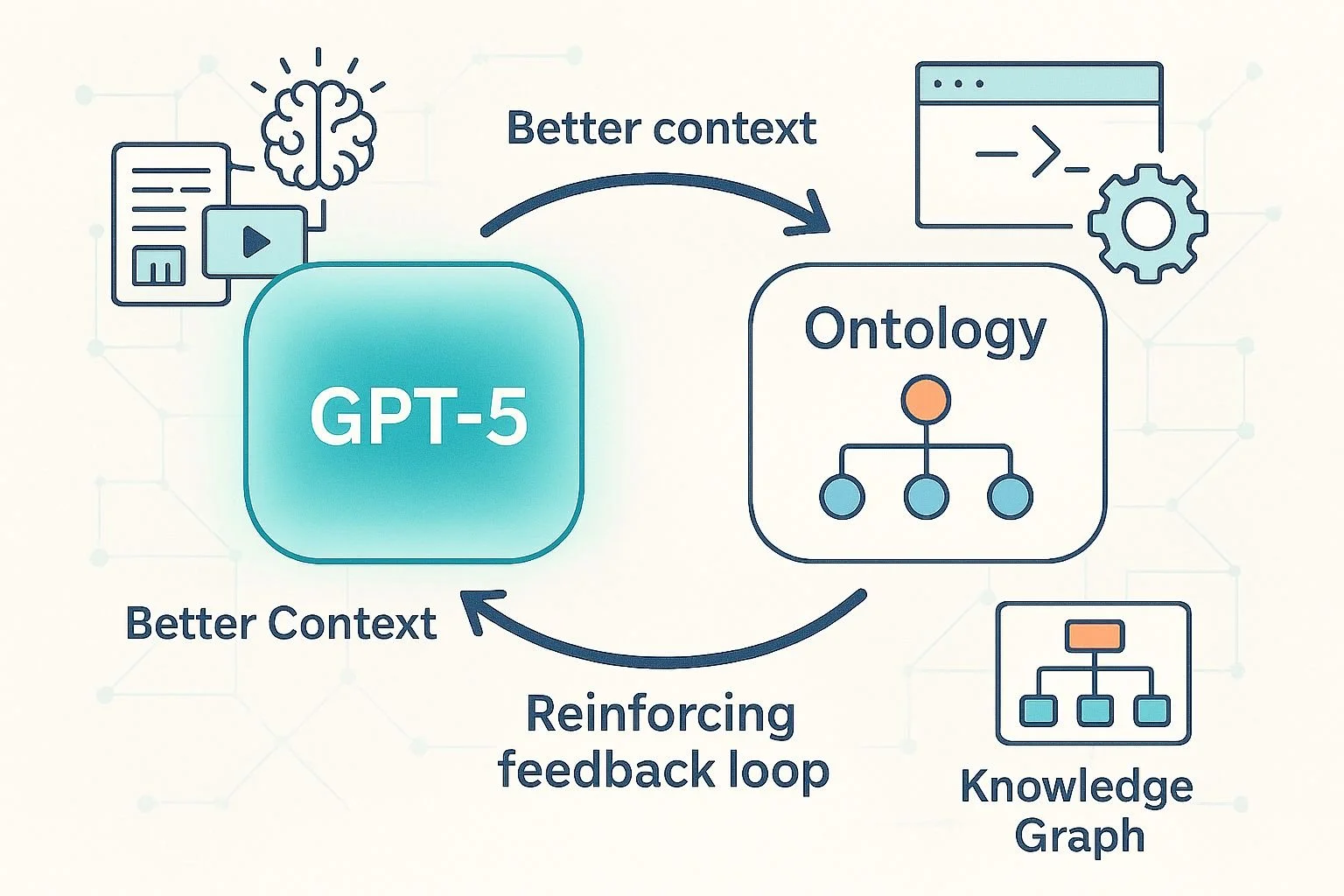

Revisiting the Neural-Symbolic Loop: GPT-5 and Ontologies in Tandem

With GPT-5, the synergy between LLMs and ontologies is clearer than ever. Larger context, multimodal input, and tool use let models help build ontologies — and ontologies, in turn, strengthen LLM reasoning, creating a self-reinforcing loop of improvement.

Reasoning Will Fall

OpenAI’s o3 model has set new highs on significant benchmarks, and that’s a game-changer for all of us. If AI can reason, code, and excel in maths and science, it’s only a matter of time before it starts reshaping tasks critical to nearly every business. Let’s dive into how o3 performed on key benchmarks: